Teaching ZFS about time

ZFS is a robust file system, in large part thanks to its copy-on-write design. Problems can still show up: flaky cables, dying drives, or the occasional cosmic ray flipping a bit. For exactly those situations ZFS has a feature called scrub. Scrub walks every used block starting from the uberblock and compares the stored checksum against the data on disk.

The catch is that scrubs are expensive, especially when the pool holds petabytes. An admin who hit a power-supply problem or a sudden shutdown usually wants to scrub the data written around that event, not the entire pool. That is the goal here: teach ZFS something about wall-clock time.

Internally, ZFS only thinks in transaction group (TXG) numbers.

Administrators, inconveniently, think in dates.

A TXG is just a uint64_t that goes up with every transaction, and there has never been a clean way to map between the two.

The goal here is not to record a timestamp for every transaction group. That would waste both space and IOPS for almost no benefit. A rough estimate is fine. If a user asks for an event "five months ago, on Friday", we can hand back a TXG that lands somewhere on Friday — or, at worst, a few TXGs earlier. A small over-scrub is a feature, not a bug. The user still asked to scrub Friday, and they still avoid scrubbing the two years of data that have nothing to do with the event.

The first thing to try is the timestamp that already lives in the uberblock:

$ sudo zdb -u zroot

Uberblock:

magic = 0000000000bab10c

version = 5000

txg = 53830995

guid_sum = 5886975331706583016

timestamp = 1778047065 UTC = Wed May 6 07:57:45 2026

That works, until it doesn't. A pool only keeps around 128 uberblocks, and on a busy pool those 128 timestamps can all sit within a few minutes of each other. This simply won't work for a week or month case.

The next idea was to use zpool history, which already records timestamps for admin events.

That gets us a longer timeline, but the events are whatever the admin happened to do.

Building a feature on "hopefully someone ran zfs snapshot near the right time" is not a great foundation.

So we needed something dedicated.

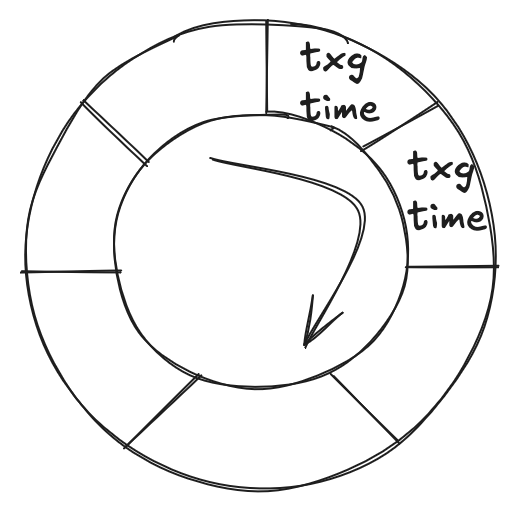

The structure we ended up adding is a circular round-robin database (crrd), stored in the MOS, that maps timestamps (UTC) to TXGs. The database has a fixed capacity; once it fills up, the oldest record is overwritten by the newest. This is the round-robin buffer from your algorithms 101 course, dressed up for on-disk life.

So we can build a structure that represents this:

typedef struct {

uint64_t rrdd_time;

uint64_t rrdd_txg;

} rrd_data_t;

typedef struct {

uint64_t rrd_head; /* head (beginning) */

uint64_t rrd_tail; /* tail (end) */

uint64_t rrd_length;

rrd_data_t rrd_entries[RRD_MAX_ENTRIES];

} rrd_t;

Each record is a time and txg pair, and the rest of the struct just tracks the head, tail, and current length of the ring.

As a side note: we did not strictly need to write head and tail to disk.

The timestamps are monotonic, so on load we could scan the entries, find the wrap point, and recover head and tail ourselves.

But I guess our algorithms 101 professor would be prouder of us this way.

Now for the tricky part. A single crrd does not get us very far. Say we record one entry every ten minutes and keep 256 of them: that is about a day and a half of coverage. Better than the uberblock case. Still useless if the user wants "last Tuesday".

The obvious move is "just make the buffer bigger".

And here we made one decision that matters.

The crrd does not live in the MOS as one ZAP entry per record.

The whole ring — rrd_head, rrd_tail, rrd_length, and all 256 (time, txg) pairs — is packed into a single binary blob and written with zap_update, using an integer size of 8 and a count of sizeof(rrd_t) / 8.

That means the first limit we hit is not the micro ZAP block size.

It is the per-entry value limit, ZAP_MAXVALUELEN, which was a hard 8 KiB at the time:

#define ZAP_MAXVALUELEN (1024 * 8)

With a 24-byte header (rrd_head, rrd_tail, rrd_length) and 16-byte (time, txg) records, the math is simple:

(8192 - 24) / 16 = 510

We found this out the hard way.

The first version of the code set the per-ring capacity to 512, and zap_update came back with E2BIG from fzap_checksize:

if (integer_size * num_integers > ZAP_MAXVALUELEN)

return (SET_ERROR(E2BIG));

This was before large_microzap existed, so there was no way around it — ZAP_MAXVALUELEN was the wall.

The buffer settled at 256 entries: a nice round number, well under the 510 limit.

One ring isn't enough. Sample every ten minutes and we cover about a day and a half — nothing older. Sample once a month and we cover decades — but we lose the day inside the month. So we keep three rings, each with its own resolution and its own ZAP entry:

- minutes — sampled every

zfs_spa_flush_txg_timeseconds (default600, i.e. ten minutes), 256 entries, about 1.5 days of detailed coverage, - days — one entry per day, 256 entries, about 8 months,

- months — one entry per month, 256 entries, about 21 years.

When a TXG falls off the minute ring it has already been copied into the daily ring; same again from daily to monthly. Three separate ZAP entries, each safely under the 8 KiB value limit, and together they cover the last two decades while keeping enough resolution for recent events.

With the database in place, zpool scrub learned two new flags: -S for the start date and -E for the end date.

Both accept "YYYY-MM-DD" or "YYYY-MM-DD HH:MM" in local time.

The end date is rounded up: scrubbing a bit more than asked is fine, scrubbing less is not.

# Scrub everything written in a specific window, and wait for it.

$ zpool scrub -w -S "2026-04-01 14:30" -E "2026-04-01 18:00" tank

# Day-only form is fine too.

$ zpool scrub -S "2026-03-15" -E "2026-03-20" tank

# Open-ended start: scrub everything up to a cutoff.

$ zpool scrub -E "2026-04-01 00:00" tank

Two caveats. The database only knows about TXGs recorded after the feature was enabled — anything older falls back to a full scrub. And if you set the clock backwards by hand, the database gets confused, since we trust the clock at sample time.

This work went in as openzfs/zfs#16853, merged in commit 4ad33a2, and shipped in OpenZFS 2.4.0.

ZFS still does not really know what time it is. It just keeps a small, lossy notebook of when interesting TXGs went by. For the "scrub the bit around last Friday" problem, that turns out to be exactly enough.

This development effort was sponsored by Wasabi Technologies, Inc. and Klara, Inc.